AI Clinical Errors in 2026: 5 Critical Questions That Determine Who Is Legally Responsible. Discover who bears legal responsibility when AI makes clinical errors in 2026. Essential insights for nurses, physicians, hospitals, and healthcare technology developers.

5 Critical Questions That Determine Who Is Legally Responsible: AI Clinical Errors in 2026

Introduction

Artificial intelligence is reshaping clinical decision-making at a pace that has outrun the legal frameworks designed to govern it. From diagnostic imaging algorithms and sepsis prediction tools to AI-assisted medication dosing systems, these technologies are now embedded in daily clinical workflows throughout U.S. hospitals and health systems. Yet when an AI tool produces a flawed recommendation that harms a patient, the question of who bears legal responsibility remains one of the most contested and unresolved issues in contemporary healthcare law.

According to the American Medical Association (AMA) Digital Medicine Health Equity Report 2023, AI adoption in clinical settings is accelerating faster than regulatory and liability frameworks can adapt, creating a dangerous accountability gap that every healthcare professional needs to understand in 2025.

The Liability Landscape: Why AI Clinical Errors Are Legally Unprecedented

Traditional medical malpractice law is built on the concept of a duty of care owed by an identifiable human clinician to a patient. When that duty is breached and harm results, liability attaches to the person who made, or failed to make, the appropriate clinical judgment. AI fundamentally disrupts this framework because the entity generating the clinical recommendation is neither a licensed professional nor a legal person capable of bearing liability in the traditional sense.

Legal scholar and bioethicist I. Glenn Cohen of Harvard Law School has written extensively on the liability vacuum created by AI in medicine, arguing that current tort law was never designed to adjudicate harm caused by algorithmic systems operating within clinical environments. As AI tools move from passive decision-support functions toward active clinical recommendations (flagging deteriorating patients, suggesting diagnoses, or generating treatment pathways), the legal exposure of every human stakeholder in that process simultaneously expands. Understanding who sits within that exposure is the central challenge for healthcare law in 2025.

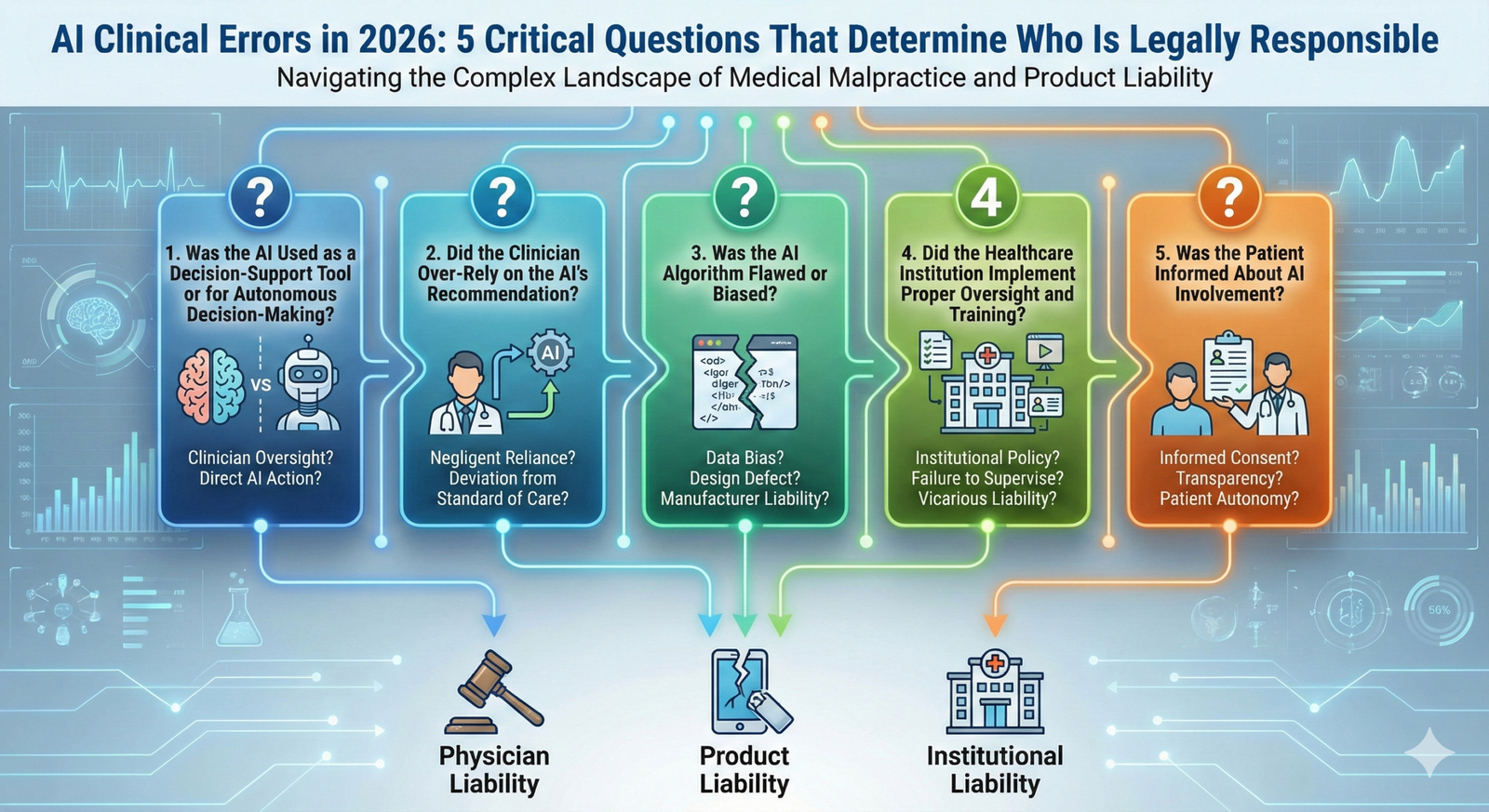

Question 1: Is the Clinician Who Used the AI Tool Liable?

The most immediate and frequently litigated source of AI-related clinical liability is the treating clinician: the physician, nurse practitioner, or other licensed provider who acted on, or failed to question, an AI-generated recommendation. Courts and state medical boards have been consistent on one foundational principle: the existence of an AI tool does not transfer or dilute a clinician's professional duty of care to the patient.

Under the standard of care doctrine, a clinician is expected to exercise the judgment of a competent professional in their field. Blindly following an AI recommendation without applying independent clinical reasoning, particularly when the patient's signs and symptoms or test results suggest a discrepancy, can constitute negligence. Conversely, overriding an AI recommendation without documented clinical justification can also generate liability if the AI was correct and the override contributed to patient harm. The clinician remains the final decision-maker, and in the eyes of the law, that role carries full responsibility regardless of which technology assisted in the process.

Question 2: Can the Hospital or Health System Be Held Responsible?

Healthcare institutions that deploy AI clinical tools carry their own independent layer of liability under the legal doctrine of corporate negligence, a standard that holds hospitals accountable for the systems, policies, and technologies they implement in patient care settings. The landmark case Darling v. Charleston Community Memorial Hospital established the principle that hospitals have a non-delegable duty to ensure the safety of the care environment, a duty that extends logically to the AI systems now operating within that environment.

If a hospital deploys an AI tool without adequate staff training, fails to establish protocols for documenting clinician overrides, or continues using a system after known errors have been reported, the institution itself becomes a primary defendant in any resulting malpractice action. The Joint Commission's 2023 guidance on health information technology safety explicitly identifies AI-related system failures as a category of sentinel event risk, reinforcing institutional accountability as a non-negotiable component of responsible AI deployment in clinical settings.

Question 3: Does the AI Developer or Vendor Bear Legal Liability?

Product liability law offers one of the clearest frameworks for holding AI developers and technology vendors accountable for clinical errors caused by their systems. Under strict product liability doctrine, a manufacturer (or, in the AI context, a developer) can be held liable for harm caused by a defective product regardless of whether negligence can be proven. Defects can be categorized as design defects, manufacturing defects, or failures to warn, each of which maps onto identifiable failure modes in clinical AI systems.

An AI diagnostic tool that was trained on a non-representative dataset and produces systematically biased recommendations for certain patient populations represents a design defect. A system that performs accurately in controlled validation environments but degrades in real-world clinical conditions may reflect a manufacturing or deployment defect. A vendor that fails to adequately disclose the known limitations of its algorithm to the health systems that purchase the product faces failure-to-warn liability. The Food and Drug Administration's (FDA) evolving regulatory framework for Software as a Medical Device (SaMD) is increasingly shaping the evidentiary standards by which these defect claims are evaluated in litigation.

Question 4: How Does Shared Liability Work in AI-Assisted Clinical Errors?

In most real-world AI clinical error scenarios, liability does not rest with a single party; it is distributed across multiple defendants whose contributions to the harm must be apportioned by a court or jury. This shared liability framework, governed by principles of comparative fault in most U.S. jurisdictions, means that a patient harmed by an AI clinical error may simultaneously pursue claims against the treating clinician, the employing institution, and the AI developer.

The challenge for plaintiffs and defense attorneys alike is establishing the precise causal chain: did the AI generate a flawed output, did the clinician fail to adequately scrutinize it, or did the institution fail to provide the training and protocols necessary for safe use? Expert testimony from clinical informaticists, legal nurse consultants, and AI ethics experts has become an increasingly important component of these cases, and the absence of clear industry-wide standards for AI clinical performance creates significant evidentiary complexity that favors well-documented clinical decision records on the part of individual providers.
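To make the apportionment idea concrete, the following is a minimal arithmetic sketch, not a statement of how any particular jurisdiction calculates awards. It assumes a simplified pure several-liability model in which each defendant pays only its assigned percentage of fault. The party names, percentages, and damages figure are hypothetical, and real rules (joint and several liability, damage caps, plaintiff fault) vary by state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FaultShare:
    """One defendant's share of fault, as a hypothetical fact-finder might assign it."""
    party: str
    percent: float  # percentage of total fault, 0 to 100


def apportion(total_damages: float, shares: list[FaultShare]) -> dict[str, float]:
    """Split damages in proportion to fault (simplified several-liability assumption)."""
    total_percent = sum(s.percent for s in shares)
    if abs(total_percent - 100.0) > 1e-9:
        raise ValueError(f"fault shares must sum to 100, got {total_percent}")
    return {s.party: round(total_damages * s.percent / 100.0, 2) for s in shares}


# Hypothetical allocation across the three parties discussed above.
shares = [
    FaultShare("treating clinician", 40.0),
    FaultShare("employing institution", 25.0),
    FaultShare("AI developer", 35.0),
]
awards = apportion(1_000_000.0, shares)
for party, amount in awards.items():
    print(f"{party}: ${amount:,.2f}")
```

The sketch only illustrates why the causal chain matters: each percentage point of fault shifted between parties moves a proportional share of the damages.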

Question 5: What Role Does Informed Consent Play in AI Clinical Liability?

An emerging dimension of AI clinical liability that is rapidly gaining traction in both academic literature and courtrooms is the question of patient informed consent regarding AI involvement in clinical decision-making. Traditional informed consent doctrine requires that patients be informed of the nature of the treatment or diagnostic procedure they are undergoing, including material risks. If AI systems are generating recommendations that materially influence clinical decisions, a compelling legal and ethical argument exists that patients have a right to know about that involvement.

The American College of Physicians (ACP) Ethics Manual, Seventh Edition, supports the principle that transparency in the use of clinical decision-support tools is consistent with the ethical duty of veracity in the physician-patient relationship. Several state legislatures are actively considering informed consent rules that would require explicit disclosure of AI involvement in diagnosis and treatment planning. Clinicians and institutions that fail to anticipate and address this evolving standard face not only ethical exposure but an expanding informed consent liability risk that is distinct from, and cumulative with, traditional malpractice claims.

How Healthcare Professionals Can Protect Themselves Today

Given the current state of legal uncertainty surrounding AI clinical liability, healthcare professionals at every level have a practical and professional duty to take proactive protective measures. Clinicians should document their independent clinical reasoning whenever acting on or overriding an AI recommendation, creating a clear contemporaneous record that demonstrates the exercise of professional judgment. Institutions should establish written AI governance policies that define training requirements, override protocols, and adverse event reporting procedures for every AI clinical tool in active deployment.
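For teams building or configuring documentation workflows, the record described above might be modeled roughly as follows. This is an illustrative sketch only: the class name, field names, and validation rules are assumptions made for the example, not a legal standard or any EHR vendor's schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

ALLOWED_ACTIONS = ("followed", "overridden")


@dataclass(frozen=True)
class AIDecisionNote:
    """Hypothetical contemporaneous note tying an AI recommendation to clinician reasoning."""
    clinician_id: str
    tool_name: str
    ai_recommendation: str
    action: str              # "followed" or "overridden"
    clinical_rationale: str  # the clinician's independent reasoning, in their own words
    recorded_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # Reject notes that do not say what was done with the recommendation.
        if self.action not in ALLOWED_ACTIONS:
            raise ValueError(f"action must be one of {ALLOWED_ACTIONS}")
        # Require a rationale whether the recommendation was followed or overridden.
        if not self.clinical_rationale.strip():
            raise ValueError("clinical_rationale must not be empty")


# Hypothetical example entry.
note = AIDecisionNote(
    clinician_id="RN-0001",
    tool_name="sepsis-alert",
    ai_recommendation="Escalate: elevated sepsis risk score",
    action="overridden",
    clinical_rationale="Vitals stable on reassessment; lactate normal; will recheck in 1 hour.",
)
print(note.action, note.recorded_at.isoformat())
```

Requiring a rationale in both branches mirrors the point that following and overriding an AI recommendation each carry liability if the reasoning is undocumented.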

Individual nurses and allied health professionals, who interact with AI-generated alerts and recommendations as part of routine workflow, should verify that their personal professional liability insurance policies address AI-assisted clinical scenarios, as many standard policies were written before these tools became prevalent. NSO and similar carriers are beginning to update policy language to reflect AI-related liability, but nurses and advanced practice providers should specifically confirm this coverage is in place. Professional organizations such as the ANA, the American Association of Nurse Practitioners (AANP), and the AMA are actively developing position statements and practice guidelines on AI accountability that all clinicians should monitor and integrate into their practice.

Conclusion

The question of who is legally responsible when AI makes a clinical error does not yet have a single, settled answer in U.S. law, and that ambiguity itself represents one of the most significant patient safety and professional liability risks of 2025. Clinicians retain their duty of care regardless of algorithmic assistance, institutions bear corporate accountability for the tools they deploy, developers face product liability exposure for flawed systems, and informed consent standards are rapidly expanding to encompass AI transparency obligations.

For nurses, physicians, hospital administrators, healthcare law professionals, and nursing educators preparing the next generation of clinicians, the imperative is clear: AI clinical liability is not a future concern. It is a present and pressing reality that demands immediate professional awareness, documentation discipline, and policy engagement at every level of healthcare practice.

Frequently Asked Questions

FAQ 1: Can a nurse be held personally liable for following an AI clinical recommendation that harms a patient?

Yes. Nurses retain their professional duty of care regardless of AI involvement in the decision-making process. If a nurse follows an AI recommendation without applying independent clinical judgment, particularly when contradictory clinical signs and symptoms are present, that failure to question the recommendation can constitute negligence under the applicable standard of care.

FAQ 2: Is there currently a federal law that specifically governs AI clinical liability in the United States?

No comprehensive federal statute specifically addresses AI clinical liability as of 2025. The FDA regulates AI-based Software as a Medical Device (SaMD) under existing medical device frameworks, and liability is currently adjudicated under state tort law. Federal legislative proposals addressing AI accountability in healthcare are under active congressional consideration but have not been enacted.

FAQ 3: What documentation should clinicians maintain when using AI clinical decision-support tools?

Clinicians should document their independent clinical reasoning in the patient record whenever an AI recommendation influences a clinical decision, whether followed or overridden. This contemporaneous record demonstrates the exercise of professional judgment and is the strongest individual protection against AI-related malpractice claims during litigation or board investigations.

FAQ 4: Are AI developers legally required to disclose the limitations of their clinical algorithms to hospitals?

Under emerging FDA SaMD policies and product liability failure-to-warn doctrine, developers have a duty to disclose known limitations, validation data gaps, and intended use boundaries to healthcare institutions deploying their tools. Failure to provide adequate disclosure can form the basis of a product liability claim if undisclosed limitations contribute to patient harm.
